In the demo video, the deadletter queue was named cbc-demo-queue-deadletter.

Primary Queues

Create one queue per data type. Attach a queue policy that grants the S3 bucket `sqs:SendMessage` permission; a sample policy can be found in the Appendix: Sample Queue Policy. Specify the deadletter queue created in the step above.
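The queue setup above can be sketched in Python. This is a minimal, hypothetical plan, not the documented procedure.

It makes these assumptions:

* The three Data Forwarder types are Alerts, Endpoint Events, and Watchlist Hits (the types named in the overview).
* The region, account ID, bucket name, and queue/prefix naming scheme are placeholders.

For each type, the sketch builds three things:

* an S3 prefix;
* a queue policy that lets S3 event notifications from the bucket call `sqs:SendMessage`;
* a `RedrivePolicy` pointing at the deadletter queue.

```python
import json

# Hypothetical identifiers for illustration only; substitute your own.
REGION = "us-east-1"
ACCOUNT_ID = "123456789012"
BUCKET = "cbc-demo-bucket"
DLQ_NAME = "cbc-demo-queue-deadletter"  # name used in the demo video
DATA_TYPES = ["alerts", "endpoint-events", "watchlist-hits"]  # hypothetical slugs


def queue_arn(name: str) -> str:
    """Build an SQS queue ARN from the region, account, and queue name."""
    return f"arn:aws:sqs:{REGION}:{ACCOUNT_ID}:{name}"


def queue_policy(queue_name: str, bucket: str) -> dict:
    """Policy letting S3 event notifications from one bucket call sqs:SendMessage."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "s3.amazonaws.com"},
                "Action": "sqs:SendMessage",
                "Resource": queue_arn(queue_name),
                "Condition": {"ArnLike": {"aws:SourceArn": f"arn:aws:s3:::{bucket}"}},
            }
        ],
    }


def primary_queue_attributes(queue_name: str, max_receive_count: int = 5) -> dict:
    """String-valued SQS attributes for a primary queue that redrives to the DLQ."""
    redrive = {
        "deadLetterTargetArn": queue_arn(DLQ_NAME),
        "maxReceiveCount": str(max_receive_count),
    }
    return {
        "Policy": json.dumps(queue_policy(queue_name, BUCKET)),
        "RedrivePolicy": json.dumps(redrive),
    }


def plan() -> dict:
    """One primary queue and one S3 prefix (directory) per data type."""
    result = {}
    for dt in DATA_TYPES:
        name = f"cbc-demo-queue-{dt}"
        result[dt] = {
            "queue_name": name,
            "s3_prefix": f"{dt}/",
            "attributes": primary_queue_attributes(name),
        }
    return result


if __name__ == "__main__":
    for data_type, cfg in plan().items():
        print(data_type, cfg["queue_name"], cfg["s3_prefix"])
```

SQS queue attributes are string-valued, so both policies are serialized with `json.dumps`. The resulting dict has the shape that boto3's `create_queue(QueueName=..., Attributes=...)` expects. The `maxReceiveCount` of 5 is an arbitrary choice: it is how many failed receives a message gets before SQS moves it to the deadletter queue.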

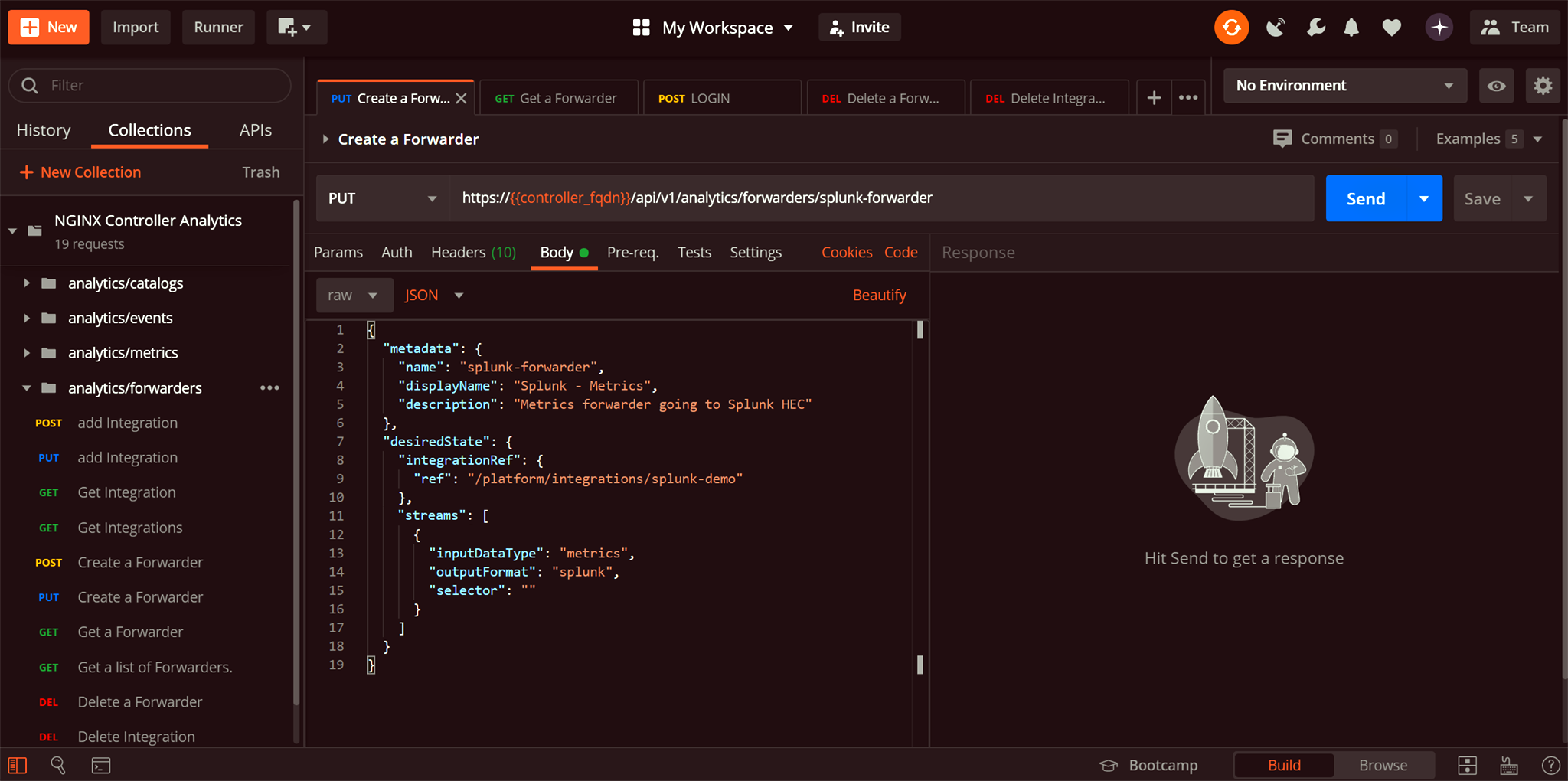

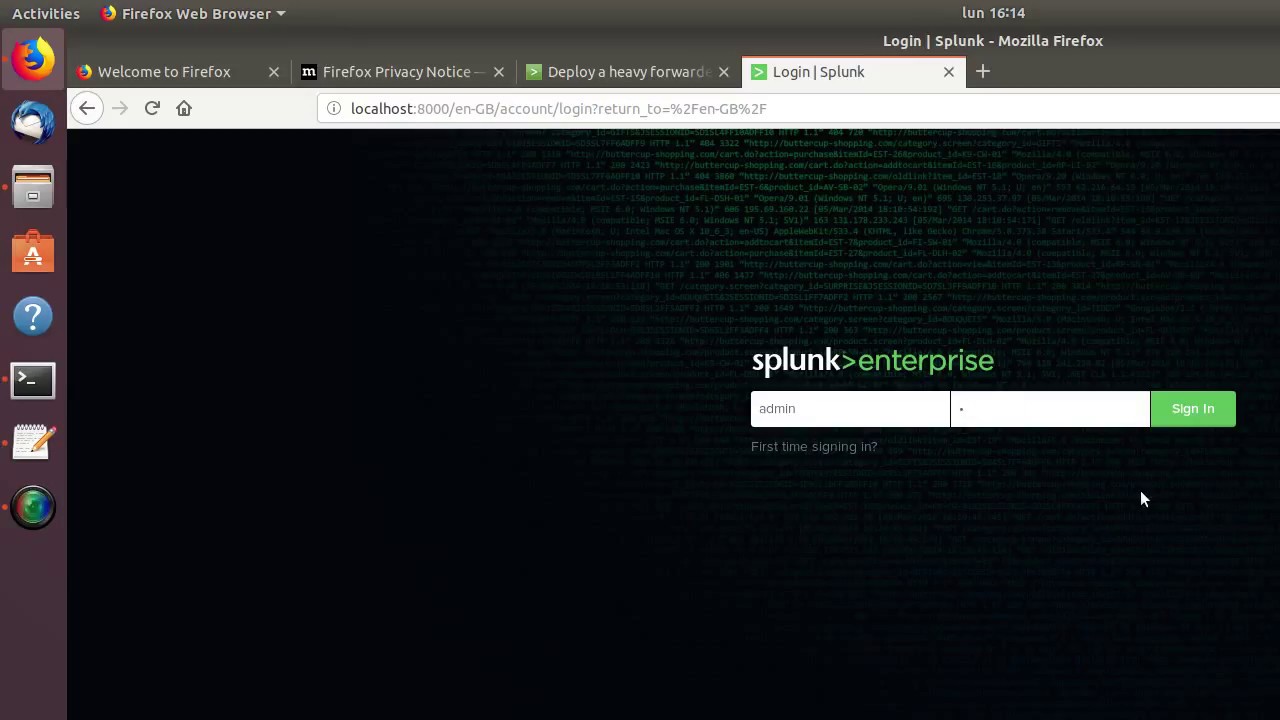

The Data Forwarder was built for low-latency, reliable data streaming at scale. If your organization has high-volume alerts, or you want to bring the visibility that Watchlist Hits and Endpoint Events provide into Splunk, the Data Forwarder is your solution.

Our Carbon Black Cloud Splunk App offers native inputs for the Alerts, Audit Logs, Live Query Results, and Vulnerabilities data sets. You configure the app with a Carbon Black Cloud API key, and it does the rest. The native input works well for lower-volume data sets. If you're an enterprise SOC where scale and reliability are critical, the Data Forwarder is our recommended solution.

Carbon Black Cloud currently offers three data types in the Data Forwarder. Each type should get its own forwarder, its own prefix (directory) in the S3 bucket, its own SQS queue, its own Splunk input, and its own Splunk source type.

KMS Encryption: The Carbon Black Cloud Data Forwarder now supports KMS encryption (symmetric keys only). This requires granting additional permissions so that Carbon Black Cloud's principal can access the key. See the Appendix: Sample Policy for KMS Encryption for additional details and examples.

Most SQS consumers require a deadletter queue. This is a place where the consumer can dump bad or malformed messages from the primary queues if something goes wrong, which avoids both data loss and reprocessing bad data.

To set up forwarding from the command line, open a command or shell prompt and navigate to the $SPLUNK_HOME/bin/ directory. With the CLI, setup takes two steps: first enable forwarding on the Splunk Enterprise instance, then configure forwarding to a specified receiver. Any further configuration of forwarding while indexing must be done in the outputs.conf file.
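The two-step CLI flow can be sketched as a small Python wrapper.

* It assumes the `splunk enable app SplunkForwarder` and `splunk add forward-server <host>:<port>` commands from Splunk's forwarding documentation. Verify both against your Splunk version.
* The `/opt/splunk` fallback for `$SPLUNK_HOME` and the helper names are hypothetical.
* By default the wrapper only prints the commands, with credentials redacted.

```python
import os
import shlex
import subprocess
from pathlib import Path


def splunk_bin() -> Path:
    """Locate the splunk executable under $SPLUNK_HOME/bin (fallback path is an assumption)."""
    home = os.environ.get("SPLUNK_HOME", "/opt/splunk")
    return Path(home) / "bin" / "splunk"


def forwarding_commands(receiver_host: str, receiver_port: int, auth: str) -> list[list[str]]:
    """Build argv lists for the two CLI steps; auth is '<username>:<password>'."""
    splunk = str(splunk_bin())
    return [
        # Step 1: enable forwarding on this Splunk Enterprise instance.
        [splunk, "enable", "app", "SplunkForwarder", "-auth", auth],
        # Step 2: configure forwarding to the receiver at host:port.
        [splunk, "add", "forward-server", f"{receiver_host}:{receiver_port}", "-auth", auth],
    ]


def redact(argv: list[str]) -> list[str]:
    """Return a copy of argv with the value after -auth masked, for safe printing."""
    out = list(argv)
    for i, token in enumerate(out[:-1]):
        if token == "-auth":
            out[i + 1] = "<user>:<password>"
    return out


def run(commands: list[list[str]], dry_run: bool = True) -> None:
    """Print each (redacted) command; execute it only when dry_run is False."""
    for argv in commands:
        print(shlex.join(redact(argv)))
        if not dry_run:
            subprocess.run(argv, check=True)


if __name__ == "__main__":
    run(forwarding_commands("10.1.1.197", 9997, "admin:changeme"))
```

Keeping the commands as argv lists avoids shell quoting issues when `subprocess.run` executes them. The dry-run default lets you review the exact commands before anything changes on the instance.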
Configure heavy forwarders to index and forward data

A heavy forwarder has an advantage over light and universal forwarders: it can index your data locally as well as forward it to another Splunk indexer. To set up heavy forwarding with Splunk Web:

1. Select Settings > Forwarding and receiving.
2. Select Add new at Configure forwarding.
3. Enter the host name or IP address for the receiving Splunk instance, along with the receiving port that you specified when you configured the receiver. To implement load-balanced forwarding, you can enter multiple hosts as a comma-separated list.

If you want to store data on the forwarder, you must enable that capability. You can do this either through Splunk Web as described in the steps above, or by editing the outputs.conf configuration file, which controls forwarding outputs. Select Yes to store and maintain a local copy of the indexed data on the forwarder.
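You can edit outputs.conf instead of using Splunk Web. This sketch renders an outputs.conf for a heavy forwarder that keeps a local copy and load-balances across several receivers.

* It assumes the standard `[tcpout]` stanza settings `defaultGroup`, `indexAndForward`, and `server`.
* The group name and the default receiving port 9997 are assumptions.
* The host-parsing helper is hypothetical. It handles host names and IPv4 addresses, not bare IPv6.

```python
def render_outputs_conf(hosts_csv: str, port: int = 9997,
                        group: str = "default-autolb-group",
                        index_and_forward: bool = True) -> str:
    """Render an outputs.conf body for a heavy forwarder.

    hosts_csv mirrors the comma-separated host list accepted in Splunk Web.
    """
    hosts = [h.strip() for h in hosts_csv.split(",") if h.strip()]
    if not hosts:
        raise ValueError("at least one receiving host is required")
    # Append the receiving port unless the entry already carries one (host:port).
    servers = [h if ":" in h else f"{h}:{port}" for h in hosts]
    lines = [
        "[tcpout]",
        f"defaultGroup = {group}",
        # true keeps a locally indexed copy in addition to forwarding.
        f"indexAndForward = {'true' if index_and_forward else 'false'}",
        "",
        # Listing several servers in one group makes the forwarder load-balance across them.
        f"[tcpout:{group}]",
        f"server = {', '.join(servers)}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_outputs_conf("10.1.1.197, 10.1.1.200"))
```

Write the result to `$SPLUNK_HOME/etc/system/local/outputs.conf` (or an app's `local` directory), then restart Splunk so the change takes effect.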
